As part of our current work on investigating trends in analytics and in contextualising it to post-compulsory education – which we are calling our Analytics Reconnoitre – I attended the Teradata Universe Conference recently. Teradata Universe is very much not an academic conference; this was a trip to the far side of the moon, to the land of corporate IT, grey-suits galore and a dress code…

Before giving some general impressions and then following with some more in-depth reflections and arising thoughts, I should be clear about the terms “analytics” and “big data”.

My working definition for Analytics, which I will explain in more detail in a forthcoming white paper and associated blog post, is:

“Analytics is the process of developing actionable insights through problem definition and the application of statistical models and analysis against existing and/or simulated future data.”

I am interpreting Big Data as being data that is at such a scale that conventional databases (single server relational databases) can no longer be used.

Teradata has a 30-year history of selling and supporting Enterprise Data Warehouses, so it should not have been a surprise that infrastructure figured in the conference. What was surprising was the degree to which infrastructure (and infrastructural projects) figured compared to applications and analytical techniques. There were some presentations in which brief case studies outlined applications, but I did not hear any reference to algorithmic, methodological, etc. development, nor indeed any reference to existing techniques from the data mining (a.k.a. “knowledge discovery in databases”) repertoire.

My overall impression is that the corporate world is generally grappling with pretty fundamental data management issues and generally focused on reporting and descriptive statistics rather than inferential and predictive methods. I don’t believe this is due to complacency but simply to the reality of where they are now. As the saying goes “if I was going there, I wouldn’t start here”.

The Case for “Data Driven Decisions”

Erik Brynjolfsson, Director of the MIT Center for Digital Business, gave an interesting talk entitled “Strength in Numbers: How do Data-Driven Decision-Making Practices affect Performance?”

The phrase “data driven decisions” raises my hackles since it implies automation and the elimination of the human component. This is not an approach to strive for. Stephen Brobst, Teradata CTO, touched on this issue in the last plenary of the conference when he asserted that “Success = Science + Art” and backed up the assertion with examples. Whereas my objections to data driven decisions revolve around the way I anticipate such an approach would lead to staff alienation and significant disruption to the effective working of an organisation, Brobst was referring to the pitfall of incremental improvement leading to missed opportunities for breakthrough innovation.

As an example of a case where incremental improvement found a locally optimal solution but a globally sub-optimal one, Brobst cited actuarial practice in car insurance. Conventionally, risk estimation uses features of the car, the driver’s driving history and location and over time the fit between these parameters and statistical risk has been honed to a fine point. It turns out that credit risk data is actually a substantially better fit to car accident risk, a fact that was first exploited by Progressive Insurance back in 1996.

Rather than “data driven decisions”, I advocate human decisions supported by the use of good tools to provide us with data-derived insights. Paul Miller argues the same case against just letting the data speak for itself on his “cloud of data” blog.

This is, I should add, something Brynjolfsson and co-workers also advocate; they are only adopting terminology from the wider business world. See, for example, an article in The Futurist (Brynjolfsson, Erik and McAfee, Andrew, “Thriving in the Automated Economy”, The Futurist, March-April 2012). In this article, Brynjolfsson and McAfee make the case for partnering humans and machines throughout the world of work and leisure. They cite an interesting example of the current best chess “player” in the world, which is 2 amateur American chess players using 3 computers. They go on to make some specific recommendations to try to make sure that we avoid some socio-economic pathologies that might arise from a humans vs technology race (as opposed to humans with machines), although not everyone will find all the recommendations ethically acceptable.

To return to the topic of Brynjolfsson’s talk: it is expanded in a paper of the same title (Brynjolfsson, Erik, Hitt, Lorin and Kim, Heekyung, “Strength in Numbers: How Does Data-Driven Decisionmaking Affect Firm Performance”, April 2011). The abstract reads:

“We examine whether performance is higher in firms that emphasize decisionmaking based on data and business analytics (which we term a data-driven decisionmaking approach or DDD). Using detailed survey data on the business practices and information technology investments of 179 large publicly traded firms, we find that firms that adopt DDD have output and productivity that is 5-6% higher than what would be expected given their other investments and information technology usage. Using instrumental variables methods, we find evidence that these effects do not appear to be due to reverse causality. Furthermore, the relationship between DDD and performance also appears in other performance measures such as asset utilization, return on equity and market value. Our results provide some of the first large scale data on the direct connection between data-driven decisionmaking and firm performance.”

This is an important piece of research, adding to a relatively small existing body – which shows correlation between high levels of analytics use and factors such as growth (see the paper) – and one which I have no doubt will be followed up. They have taken a thorough approach to the statistics of correlation and tested for reverse causation. The limitations of the conclusion are clear from the abstract, however: the sample is “large publicly traded firms”. What of smaller firms? Furthermore, business sector (industry) is treated as a “control” but my hunch is that the 5-6% figure conceals some really interesting variation. The study also fails to establish mechanism, i.e. to demonstrate what it is about the context of firm A and the interventions undertaken that leads to enhanced productivity etc. These kinds of issues with evaluation in the social sciences are the subject of writings by Nick Tilley and Ray Pawson (see, for example, “Realistic Evaluation: An Overview”), which I hold in high regard. My hope is that future research will attend to these issues. For now we must settle for less complete, but still useful, knowledge.

I expect that as our Analytics Reconnoitre proceeds we will return to this and related research to explore further whether any kind of business case for data-driven decisions can be robustly made for Higher or Further Education, or whether we need to gather more evidence by doing. I suspect the latter to be the case and that for now we will have to resort to arguments on the basis of analogy and plausibility of benefits.

Zeitgeist: Data Scientists

“Data Scientist” is a term which seems to be capturing the imagination in the corporate big data and analytics community but which has not been much used in our community.

A facetious definition of data scientist is “a business analyst who lives in California”. Stephen Brobst gave his distinctions between data scientist and business analyst in his talk. His characterisation of a business analyst is someone who: is interested in understanding the answers to a business question; uses BI tools with filters to generate reports. A data scientist, on the other hand, is someone who: wants to know what the question should be; embodies a combination of curiosity, data gathering skills, statistical and modelling expertise and strong communication skills. Brobst argues that the working environment for a data scientist should allow them to self-provision data, rather than having to rely on what is formally supported in the organisation, to enable them to be inquisitive and creative.

Michael Rappa from the Institute for Advanced Analytics doesn’t mention curiosity but offers a similar conception of the skill-set for a data scientist in an interview in Forbes magazine. In March 2012, following the Strata Conference, the Guardian Data Blog also reported on various views of what comprises a data scientist.

While it can be a sign of hype for new terminology to be spawned, the distinctions being drawn by Brobst and others are appealing to me because they are putting space between mainstream practice of business analysis and some arguably more effective practices. As universities and colleges move forward, we should be cautious of adopting the prevailing view from industry – the established business analyst role with a focus on reporting and descriptive statistics – and missing out on a set of more effective practices. Our lack of baked-in BI culture might actually be a benefit if it allows us to more quickly adopt the data scientist perspective alongside necessary management reporting. Furthermore, our IT environment is such that self-provisioning is more tractable.

Experimentation, Culture and HiPPOs

Like most stereotypes, the HiPPO is founded on reality; this is decision-making based on the Highest Paid Person’s Opinion. While it is likely that UK universities and colleges are some cultural distance from the world of corporate America that stimulated the coining of “HiPPO”, we are certainly not immune from decision-making on the basis of management intuition, and anecdote suggests that many HEIs are falling into a more autocratic and executive style of management in response to a changing financial regime. As a matter of pride, though, academia really should try to be more evidence-based.

Avinash Kaushik (Digital Marketing Evangelist at Google) talked of HiPPOs and data driven decision making (sic) culture back in 2006, yet in 2012 these issues are still main stage items at Teradata Universe. Cultural inertia. In addition to proposing seven steps to becoming more data-driven, Kaushik’s posting draws the kind of distinctions between reporting and analysis that accord with the business analyst vs data scientist distinctions, above.

Stephen Brobst’s talk – “Experimentation is the Key to Business Success” – took a slightly different approach to challenging the HiPPO principle. Starting from an observation that business culture expects its leadership to have the answers to important and difficult questions, something even argumentative academics can still be found to do, Brobst argued for experimentation to acquire the data necessary for informed decision-making. He gained a further nod from me by asserting that the experiment should be designed on the basis of theorisation about mechanism (see earlier reference to the work of Tilley and Pawson).

Procter & Gamble’s approach to pricing a new product by establishing price elasticity through a set of trial markets with different price points is one example. It is hard to see this being tractable for fee-setting in most normal courses in most universities, but that may not be true of all of them, and it becomes a lot more realistic with large-scale distance education. Initiatives like Coursera have the opportunity to build out for-fee services with much better intelligence on pricing than mainstream HE can dream of.
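To make the trial-market idea concrete, here is a minimal sketch of one common way to estimate price elasticity: fit a straight line to the logarithm of units sold against the logarithm of price, and read the elasticity off the slope. This is my own illustration, not a description of the method used by the company mentioned above; the function name `price_elasticity` and the figures are hypothetical, invented so that revenue is the same in every market.

```python
import math

def price_elasticity(prices, quantities):
    """Estimate a constant price elasticity as the least-squares slope
    of ln(quantity) against ln(price)."""
    if len(prices) != len(quantities) or len(prices) < 2:
        raise ValueError("need at least two (price, quantity) pairs")
    xs = [math.log(p) for p in prices]
    ys = [math.log(q) for q in quantities]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("prices must differ across trial markets")
    return sxy / sxx

# Hypothetical trial markets: invented figures, for illustration only.
trial_prices = [8.0, 10.0, 12.5]
trial_units = [1250.0, 1000.0, 800.0]

elasticity = price_elasticity(trial_prices, trial_units)
print(f"estimated elasticity: {elasticity:.2f}")
```

Because the invented figures keep price times units constant, the estimate comes out at -1 (unit elasticity). Real trial data would be noisier and would call for more markets and some estimate of uncertainty.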

Big Data and Nanodata Velocity

There is quite a lot of talk about Big Data – data that is at such a scale that conventional databases can no longer be used – but I am somewhat sceptical that the quantity of talk is merited. One presenter at Teradata Universe actually proclaimed that big data was largely an urban myth but this was not the predominant impression; others boasted about how many petabytes of data they had (1PB = 1,000TB = 1,000,000GB). There seems to be an unwarranted implication that big data is necessary for gaining insights. While it is clear that more data points improve statistical significance and that if you have a high volume of transactions/interactions then even small % improvements can have significant bottom line value (e.g. a 0.1% increase in purchase completion at Amazon), there remains a great deal of opportunity to be more analytical in the way decisions are made using smaller scale data sources. The absence of big data in universities and colleges is an asset, not an impediment.
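The link between data volume and the detectability of small improvements can be sketched with a pooled two-proportion z-test. The test is my choice of illustration rather than anything presented at the conference, and the completion rates (5.0% against 5.1%) and visitor counts are invented. The helper name `two_proportion_p_value` is hypothetical.

```python
import math

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference between two proportions,
    using the pooled z-test and the normal approximation."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (p_b - p_a) / se
    # For a standard normal, P(|Z| >= |z|) equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))

# Invented figures: the same 0.1 percentage point uplift at two scales.
p_small = two_proportion_p_value(500, 10_000, 510, 10_000)
p_large = two_proportion_p_value(500_000, 10_000_000, 510_000, 10_000_000)

print(f"10 thousand visitors per arm: p = {p_small:.3f}")
print(f"10 million visitors per arm:  p = {p_large:.3g}")
```

With ten thousand visitors per arm the uplift is indistinguishable from noise, while with ten million it is unmistakable. That is the sense in which volume matters for small effects; larger effects, of the kind many institutional questions involve, need far less data.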

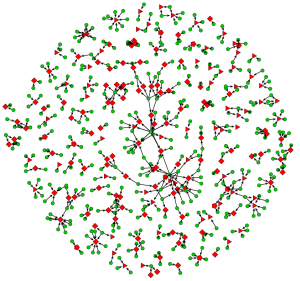

Erik Brynjolfsson chose the term “nanodata” to draw attention to the fine-grained components of most Big Data stores. Virtually all technology-mediated interactions are capable of capturing such “nanodata” and many do. The availability of nanodata is, of course, one of the key drivers of innovation in analytics. Brynjolfsson also pointed to data “velocity”, i.e. the near-real-time availability of nanodata.

The insights gained from using Google search terms to understand influenza are a fairly well-known example of using the “digital exhaust” of our collective activities to short-cut traditional epidemiological approaches (although I do not suggest it should replace them). Brynjolfsson cited a similar approach used in work with former co-worker Lynn Wu on house prices and sales (pdf), which anticipated official figures rather well. The US Federal Reserve Bank, we were told, was envious.

It has taken a long time to start to realise the vision of Cybersyn. Yet still our national and institutional decision-making relies on slow-moving and broadly obsolete data; low velocity information is tolerated when maybe it should not be. In some cases the opportunities from more near-real-time data may be neglected low-hanging fruit, and it doesn’t necessarily have to be Big Data. Maybe there should be talk of Fast Data?

Data Visualisation

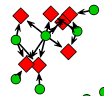

Stephen Few, author and educator on the topic of “visual business intelligence”, gave both a keynote and a workshop that could be very concisely summarised as a call to: 1) take more account of how human perception works when visualising data; 2) make more use of visualisation for sense-making. Stephen Brobst (Teradata CTO) made the latter point too: that data scientists use data visualisation tools for exploration, not just for communication.

Few gave an accessible account of visual perception as applied to data visualisation with some clear examples and reference to cognitive psychology. His “Perceptual Edge” website/blog covers a great deal of this – see for example “Tapping the Power of Visual Perception” (pdf) – as does his accessible book, “Now You See It”. I will not repeat that information here.

His argument that “visual reasoning” is powerful is easily demonstrated by comparing what can be immediately understood from the right kind of graphical presentation with a tabulation of the same data. He also made the point that visual reasoning usually happens transparently (subconsciously) and hence that we need to guard against visualisation techniques that mislead, confuse or overwhelm.

I did feel that he advocated visual reasoning beyond the point at which it is reliable by itself. For example, I find parallel coordinates quite difficult. I would have liked to see more emphasis on visualising the results of statistical tests on the data (e.g. correlation, statistical significance) particularly as I am a firm believer that we should know of the strength of an inference before deciding on action. Is that correlation really significant? Are those events really independent in time?
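The question “is that correlation really significant?” can be made concrete with a permutation test: shuffle one variable many times and see how often chance alone produces a correlation at least as strong as the one observed. The following is a minimal sketch of that general technique, not something Few presented; the function names and the two small data sets are invented for illustration.

```python
import math
import random

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def permutation_p_value(xs, ys, n_permutations=5000, seed=0):
    """Estimate how often a random re-pairing of xs and ys gives a
    correlation at least as strong (in absolute value) as observed."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed - 1e-12:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_permutations + 1)

# Invented data: one strong relationship and one weak one.
weeks = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
strong = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0, 19.9]
weak = [5, 1, 8, 3, 9, 2, 7, 4, 10, 6]

p_strong = permutation_p_value(weeks, strong)
p_weak = permutation_p_value(weeks, weak)
print(f"strong: r = {pearson_r(weeks, strong):.3f}, p = {p_strong:.4f}")
print(f"weak:   r = {pearson_r(weeks, weak):.3f}, p = {p_weak:.4f}")
```

A plot of the weak series might well suggest an upward trend to the eye, yet with only ten points a correlation of that size turns up by chance quite often. Showing the p-value alongside the chart is the kind of emphasis I would have liked to see. Note that the test assumes the observations are exchangeable, so it does not by itself answer the second question about independence in time.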

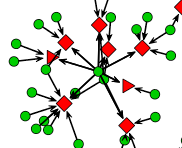

Few’s second key point – about the use of data visualisation for sense-making – began with claims that the BI industry has largely failed to support it. He summarised the typical pathway for data as: collect > clean > transform > integrate > store > report. At this point there is a wall, Few claims, that blocks a productive sense-making pathway: explore > analyse > communicate > monitor > predict.

Visualisation tools tend to have been created with before-the-wall use cases, to be about the plot in the report. I rather agree with Few’s criticism that such tool vendors tend to err towards a “bling your graph” feature-set or flashy dashboards, but there is hope in the form of tools such as Tibco Spotfire and Tableau, while Open Source aficionados or the budget-less can use ggobi for complex data visualisation, or Octave or R (among others). The problem with all of these is complexity; the challenge to visualisation tool developers is to create more accessible tools for sense-making. Without this kind of advance it requires too much skill acquisition to move beyond reporting to real analytics and that limits the number of people doing analytics that an organisation can sustain.

It is worth noting that “Spotfire Personal” is free for one year and that “Tableau Public” is free and intended to let data-bloggers et al publish to their public web servers, although I have not yet tried them.

Analytics & Enterprise Architecture

The presentation by Adam Gade (CIO of Maersk, the shipping company) was ostensibly about their use of data but it could equally have been entitled “Maersk’s Experiences with Enterprise Architecture”. Although at no point did Gade utter the words “Enterprise Architecture” (EA), many of the issues he raised have appeared in talks at the JISC Enterprise Architecture Practice Group: governance, senior management buy-in, selection of high-value targets, tactical application, … etc. It is interesting to note that Adam Gade has a marketing and sales background – not the norm for a CIO – yet seems to have been rather successful; maybe he could sell the idea internally?

The link between EA and Analytics is not one which has been widely made (in my experience and on the basis of Google search results) but I think it is an important one which I will talk of a little more in a forthcoming blog post, along with an exploration of the Zachman Framework in the context of an analytics project. It is also worth noting that one of the enthusiastic adopters of our ArchiMate (TM) modelling tool, “Archi”, is Progressive Insurance, which established a reputation as a leader in putting analytics to work in the US insurance industry (see, for example, the book Analytics at Work, which I recommend, and the summary from Accenture, pdf).

Adam Gade also talked of the importance of “continuous delivery”, i.e. that analytics or any other IT-based projects start demonstrating benefits early rather than only after the “D-Day”. I’ve come across a similar idea – “time to value” – being argued for as being more tactically important than return on investment (RoI). RoI is, I think, a rather over-used concept and a rather poor choice if you do not have good baseline cost models, which seems to be the case in F/HEIs. Modest investments returning tangible benefits quickly seems like a more pragmatic approach than big ideas.

Conclusions – Thoughts on What this Means for Post-compulsory Education

For all that the general perception is that universities and colleges are relatively undeveloped in terms of putting business intelligence and analytics to good use, I think there are some important “but …” points to make. The first “but” is that we shouldn’t measure ourselves against the most effective users from the commercial sector. The second is that the absence of entrenched practices means that there should be less inertia to adopting the most modern practices. Third, we don’t have data at the scale that forces us to acquire new infrastructure.

My overall impression is that there is opportunity if we make our own path, learning from (but not following) others. Here are my current thoughts on this path:

Learn from the Enterprise Architecture pioneers in F/HE

Analytics and EA are intrinsically related and the organisational soft issues in adopting EA in F/HE have many similarities to those for adopting analytics. One resonant message from the EA early adopters, which can be adapted for analytics, was “use just enough EA”.

Don’t get hung up on Big Data

While Big Data is a relevant technology trend, the possession of big data is not a pre-requisite for making effective use of analytics. The fact that we do not have Big Data is a freedom not a limitation.

Don’t focus on IT infrastructure (or tools)

Avoid the temptation (and sales pitches) to focus on IT infrastructure as a means to get going with analytics. While good tools are necessary, they are not the right place to start.

Develop a culture of being evidence-based

The success of analytics depends on people being prepared to critically engage with evidence based on data (including its potential weaknesses or bias and to avoid being over-trusting of numbers) and to take action on the analysis rather than being slaves to anecdote and the HiPPO. This should ideally start from the senior management. “In God we trust, all others bring data” (probably mis-attributed to W. Edwards Deming).

Experiment with being more analytical at craft-scale

Rather than thinking in terms of infrastructure or major initiatives, get some practical value with the infrastructure you have. Invest in someone with “data scientist” skills as master crafts-person and give them access to all data but don’t neglect the value of developing apprentices and of developing wider appreciation of the capabilities and limitations of analytics.

Avoid replicating the “analytics = reporting” pitfall

While the corporate sector fights its way out of that hole, let us avoid following them into it.

Ask questions that people can relate to and that have efficiency or effectiveness implications

Challenge custom and practice or anecdote on matters such as: “do we assess too much?”, “are our assessment instruments effective and efficient?”, “could we reduce heating costs with different use of estate?”, “could research groups like mine gain greater REF impact through publishing in OA journals?”, “how important is word of mouth or twitter reputation in recruiting hard-working students?”, “can we use analytics to better model our costs?”

Look for opportunities to exploit near-real-time data

Are decisions being made on old data, or no changes being made because the data is essentially obsolete? Can the “digital exhaust” of day-to-day activity be harnessed as a proxy for a measure of real interest in near-real-time?

Secure access to sector data

Sector organisations have a role to play in making sure that F/HEIs have access to the kind of external data needed to make the most of analytics. This might be open data or provisioned as a sector shared service. The data might be geospatial, socio-economic or sector-specific. JISC, HESA, TheIA, LSIS and others have roles to play.

Be open-minded about “analytics”

The emerging opportunities for analytics lie at the intersection of practices and technologies. Different communities are converging and we need to be thinking about creative borrowing and blurring of boundaries between web analytics, BI, learning analytics, bibliometrics, data mining, … etc. Take a wide view.

Collaborate with others to learn by doing

We don’t yet know the pathway for F/HE and there is much to be gained from sharing experiences in dealing with both the “soft” organisational issues and the challenge of selecting and using the right technical tools. While we may be competing for students or research funds, we will all fail to make the most from analytics and to effectively navigate the rapids of environmental factors if we fail to collaborate; competitive advantage is to be had from how analytics is applied but that can only occur if capability exists.